What Moby-Dick Solved in 1851 That AI Still Can't

Current AI architectures have a specific structural problem: they collapse ambiguity to produce outputs. Given context, they resolve to a single likely continuation. This is extraordinarily powerful. It is also, by design, incapable of sustaining the kind of recursive self-reference that most proposals for artificial general intelligence require.

A system that models its own processing — that represents itself representing — cannot do so by collapsing each state to a single resolution. The self-model and the process being modeled must coexist, simultaneously active, each defining the other. The moment one resolves, the loop breaks.

In 1851, Herman Melville built a system that solves this problem. Not computationally. Linguistically. But the architectural principles are the same — and they are formally describable, empirically testable, and verified against physical materials.

Melville designed Moby-Dick to be what I call a stereotext: a single text sustaining two complete, internally consistent readings simultaneously, where the interaction between those readings generates emergent properties present in neither alone. The surface narrative — one of the greatest novels in any language — runs on the same symbolic substrate as a second, systematic layer: a real-time self-portrait of Melville composing the very words you are reading.

The mechanism is a comprehensive conceited vocabulary — a systematic mapping between whaling terminology and the terminology of bookmaking, composition, and the physical landscape of the writing desk. This is not substitution. The word whale does not secretly mean book — it means whale and book simultaneously, and the emergent properties arise from sustaining both. Melville himself provides the key in Chapter 32, where he classifies whales as "BOOKS (subdivisible into CHAPTERS)" — not replacing one meaning with another but fusing them so that neither can be discarded. The mapping is global, sustained across 200,000 words, and generates structural predictions that can be independently verified.

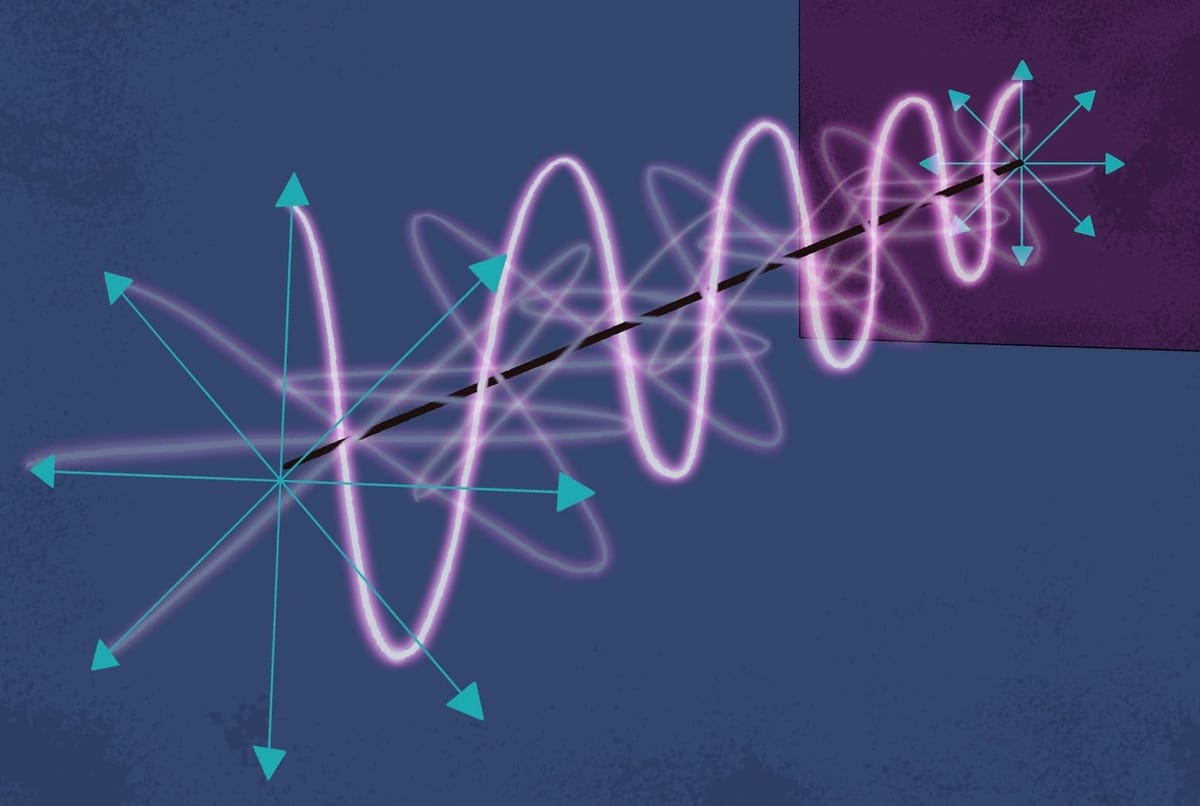

The critical architectural property: neither layer can be discarded. Collapse to the whaling narrative and you have great literature, but flat, confounding, seemingly flawed — one dimension. Collapse to the encoded layer and you have information, but inert, dry, documentary — no dramatic structure to give it temporal force. The emergent properties exist only in sustained superposition. The system requires that ambiguity not be resolved.

This is the opposite of what a transformer does. A transformer maintains distributed representations internally but resolves them at each step to produce a single output token. The architecture Melville built requires that the resolution never occur — the meaning lives in the sustained irresolution itself, and collapsing to either layer destroys what the system generates.
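The collapse described above can be made concrete with a minimal sketch. It is not a transformer; it only shows the final step, in which a full distribution over candidates is reduced to one emitted token. The candidate words and their scores are hypothetical, chosen for illustration.

```python
import math
import random

def softmax(logits):
    """Turn raw scores into a probability distribution over tokens."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def greedy_step(logits):
    """Collapse the distribution to its single most probable token."""
    probs = softmax(logits)
    return max(probs, key=probs.get)

def sampled_step(logits, rng):
    """Collapse the distribution by drawing one token at random from it."""
    probs = softmax(logits)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]

# Hypothetical scores for three candidate next words (assumed values).
logits = {"line": 2.0, "rope": 1.5, "sentence": 0.5}
probs = softmax(logits)       # all three candidates are still weighted here
chosen = greedy_step(logits)  # only one candidate survives this step
```

Whether the choice is greedy or sampled, the output is one token. The alternatives carried in `probs` do not appear in the emitted text.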

The formal class of architecture operating here I call inverse tautology. A standard tautology (A = A) is informationally null. A standard metaphor (A represents B) is unidirectional — decode the vehicle, arrive at the tenor, discard the vehicle. Inverse tautology is a closed system in which A defines B, B defines A, and the process of mutual definition generates C — a property present in neither A nor B independently. In the case of Moby-Dick, the compound system then self-defines again, A, B and C together producing D. Each generation is both independent and dependent. The hierarchy is progressive and irreversible.

This has specific implications for the problems AI research is currently trying to solve.

Self-modeling without collapse. Most proposals for AGI require a system capable of modeling its own processing. The structural problem is that a self-model built by the same forward-pass mechanism that generates outputs is not a self-model — it is another output. Inverse tautology achieves self-modeling through sustained superposition: the system's operation and its representation of that operation coexist as mutually defining layers. Neither collapses into the other. This is the difference between a system that produces descriptions of itself and a system that is its own description while simultaneously being what it describes.

Bidirectional processing. Current transformer architectures process forward: given context, generate continuation. The demonstrated architecture requires bidirectional processing, meaning generation followed by recognition of one's own generative constraints. The originating agent generates text. The text, through the conceited vocabulary, reflects the act of its own generation back to the agent. The agent reads what it has written and recognizes the reflection of its own compositional process. This recognition modifies subsequent generation. The cycle is continuous. A system achieves simulated awareness not by processing its outputs as data, but by processing them through a symbolic architecture that makes its own constraint decisions recognizable to itself. This requires persistent memory across generation and recognition cycles, something standard transformer architectures do not natively possess.
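The generate-then-recognize cycle can be sketched as a toy loop. Everything here is an illustrative assumption: the `Agent` class, its `memory` list, and the string-based "constraints" are hypothetical stand-ins, and the sketch shows only the control flow (generate, read back, store, generate again), not the symbolic architecture that would make recognition meaningful.

```python
from dataclasses import dataclass, field

@dataclass
class Agent:
    # Persistent memory shared across cycles (an assumption of this sketch).
    memory: list = field(default_factory=list)

    def generate(self, step):
        """Produce an output shaped by the most recently recognized constraint."""
        constraint = self.memory[-1] if self.memory else "none"
        return f"output {step} (shaped by: {constraint})"

    def recognize(self, text):
        """Read one's own output back and record the constraint it reveals."""
        label = text.split(" (")[0]  # e.g. "output 0"
        observed = f"constraint seen in '{label}'"
        self.memory.append(observed)
        return observed

def run_cycle(agent, steps):
    """Alternate generation and recognition; memory persists between steps."""
    outputs = []
    for step in range(steps):
        text = agent.generate(step)
        agent.recognize(text)
        outputs.append(text)
    return outputs

agent = Agent()
outputs = run_cycle(agent, 3)
```

The design point is that `recognize` writes to the same state that `generate` reads from, so each output depends on the agent's reading of its previous one.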

Productive ambiguity as computational resource. Current LLMs handle ambiguity by computing a probability distribution over possible continuations and then selecting one, a step loosely analogous to collapsing a quantum superposition. The demonstrated architecture uses ambiguity to maintain multiple meanings simultaneously and generate emergent properties from their sustained interaction. The word line means rope and sentence and the act of writing, simultaneously and irreducibly. The emergent meaning arises from holding all valuations active. This suggests a class of architectures that generate and maintain productive ambiguity rather than collapsing it, using semantic tension as a computational resource in the way quantum computing exploits superposition rather than treating it as noise to be eliminated.
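The contrast between collapsing and sustaining can be sketched as a data-structure choice. The senses below come from the essay's own examples (whale and book; rope, sentence, and the act of writing); the representation, the function names, and the weights are assumptions made for illustration. Enumerating readings is only bookkeeping and does not model the emergent interaction itself.

```python
from itertools import product

# Senses taken from the essay's examples; the dictionary layout is an assumption.
SENSES = {
    "whale": ("whale", "book"),
    "line": ("rope", "sentence", "act of writing"),
}

def collapse(word, weights):
    """Keep only the highest-weighted sense; all other senses are discarded."""
    options = SENSES.get(word, (word,))
    return max(options, key=lambda sense: weights.get(sense, 0.0))

def sustain(word):
    """Keep every sense of the word active as one bundle."""
    return frozenset(SENSES.get(word, (word,)))

def joint_readings(words):
    """Enumerate every combined reading of a phrase without choosing among them."""
    return list(product(*(SENSES.get(w, (w,)) for w in words)))

phrase = ["whale", "line"]
weights = {"whale": 0.9, "rope": 0.8}  # hypothetical preference for the surface narrative

collapsed = [collapse(w, weights) for w in phrase]  # one sense per word
sustained = [sustain(w) for w in phrase]            # all senses per word
readings = joint_readings(phrase)                   # 2 x 3 combined readings
```

After `collapse`, the bookmaking senses cannot be recovered from the result. After `sustain`, they remain available to any later step that needs them.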

Structure, not scale. Current approaches to emergent AI properties focus on scale: more parameters yield emergent capabilities. The demonstrated system achieves emergence through recursive self-reference with constrained ambiguity in a fixed-size system of roughly 200,000 words. A smaller system is possible. The architecture is finite. The emergent properties are not. If emergence of higher-order representational states can occur in a finite, scale-limited symbolic system purely through structural recursion, then the path to AGI may be an architectural problem rather than a scaling problem. Not more parameters. Different structure.

The G3/G4 fork. In the case of Moby-Dick, the architecture produces four generative levels, G1 through G4. The first two are the layers themselves. The third, G3, is recursive self-awareness, where the system reveals itself as describing its own construction; it is structurally aligned with what AGI research calls the self-model requirement. A system that models its own operation achieves G3. The fourth level, G4, is categorically different: reconstructible access to the phenomenological texture of the encoding process, that is, the felt experience of creation and not merely its structural trace. Whether artificial systems can cross that threshold is an open question. But the architecture specifies exactly where the threshold lies, which is more than most consciousness research currently offers.

The claim is falsifiable. The system generates specific, testable predictions (exact counts, physical measurements, decoded revision methods) that have been verified against primary sources at Harvard's Houghton Library. These are measurements, not interpretations. They either hold or they do not.

The full technical treatment — including the formal architecture, complete evidence chain, and specific implications for AGI recursive self-modeling — is here. The complete architectural proof across 200,000 words is The Book, or Moby-Dick: Grand Program of the Author's Mind (2026).

Melville proved the mechanism works in natural language, 174 years before the field that most needs it. The question is whether we can formalize it.